BME 548 Final Project: Optimized Real-time Facial Expression Recognition Based on YOLOv8

Xiangjiang Bao Junfei Wang

xiangjiang.bao@duke.edu junfei.wang@duke.edu


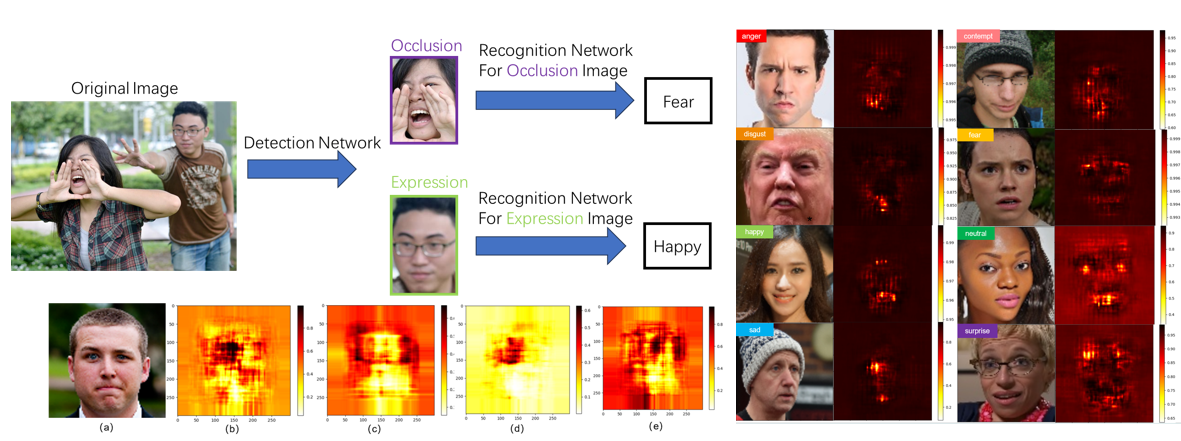

Facial expression recognition (FER) is a fundamental task in computer vision, with applications in fields such as mental health monitoring and emotion analysis. Recent deep FER systems generally address two important issues: overfitting caused by insufficient training data, and expression-unrelated variations such as illumination, head pose, and identity bias. In this work, we divide the pipeline into two primary stages. A detection network based on YOLOv8 extracts the face of each individual in the image, and the detected faces are classified according to their appearance; for each appearance class, a dedicated YOLOv8-cls classification model is trained to predict the expression. We simulate three types of image degradation for data augmentation, adopt three physical layers to improve the detection network's robustness to image degradation, and analyze the results further. The final system performs real-time FER at 11 fps.
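The two-stage pipeline described above can be sketched as follows. This is a minimal, framework-free illustration under stated assumptions, not the authors' implementation: the names `recognize_expressions`, `crop`, and `FacePrediction`, the `(x1, y1, x2, y2)` box format, and the plain-list image representation are all hypothetical. In a real deployment `detect_faces` would wrap the YOLOv8 face detector and each entry of `expression_models` a YOLOv8-cls model; here they are ordinary callables so the routing logic runs without the `ultralytics` package.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical types: an image is a 2D list of pixel values (rows of columns)
# so the sketch runs without an imaging library; real code would use arrays.
Image = List[List[int]]
Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2); x2 and y2 are exclusive


@dataclass
class FacePrediction:
    box: Box          # where the face was detected in the frame
    group: str        # appearance class assigned to the cropped face
    expression: str   # label from that class's dedicated classifier


def crop(image: Image, box: Box) -> Image:
    """Cut the detected face region out of the frame."""
    x1, y1, x2, y2 = box
    return [row[x1:x2] for row in image[y1:y2]]


def recognize_expressions(
    image: Image,
    detect_faces: Callable[[Image], Sequence[Box]],
    classify_appearance: Callable[[Image], str],
    expression_models: Dict[str, Callable[[Image], str]],
) -> List[FacePrediction]:
    """Detect every face, then route each crop to the expression
    classifier trained for its appearance class."""
    predictions = []
    for box in detect_faces(image):                  # stage 1: face detection
        face = crop(image, box)
        group = classify_appearance(face)            # choose the appearance class
        expression = expression_models[group](face)  # stage 2: class-specific FER
        predictions.append(FacePrediction(box, group, expression))
    return predictions


if __name__ == "__main__":
    # Toy frame and stand-in models, purely for demonstration.
    frame = [[(x + y) % 256 for x in range(8)] for y in range(6)]
    preds = recognize_expressions(
        frame,
        detect_faces=lambda img: [(0, 0, 4, 4), (4, 2, 8, 6)],
        classify_appearance=lambda face: "A" if face[0][0] < 4 else "B",
        expression_models={"A": lambda f: "happy", "B": lambda f: "neutral"},
    )
    for p in preds:
        print(p)
```

Keeping one expression classifier per appearance class means each classifier only receives faces from its own class. It also means the per-frame cost grows with the number of detected faces: at the reported 11 fps, detection plus every per-face classification must fit in roughly 1/11 s ≈ 91 ms per frame.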
