Multispectral Semantic Segmentation for Autonomous Vehicles: Optimizing Detector Weights As A Function of Daytime and Visibility

Francesco Mastrocinque

fam21@duke.edu

| Paper PDF |


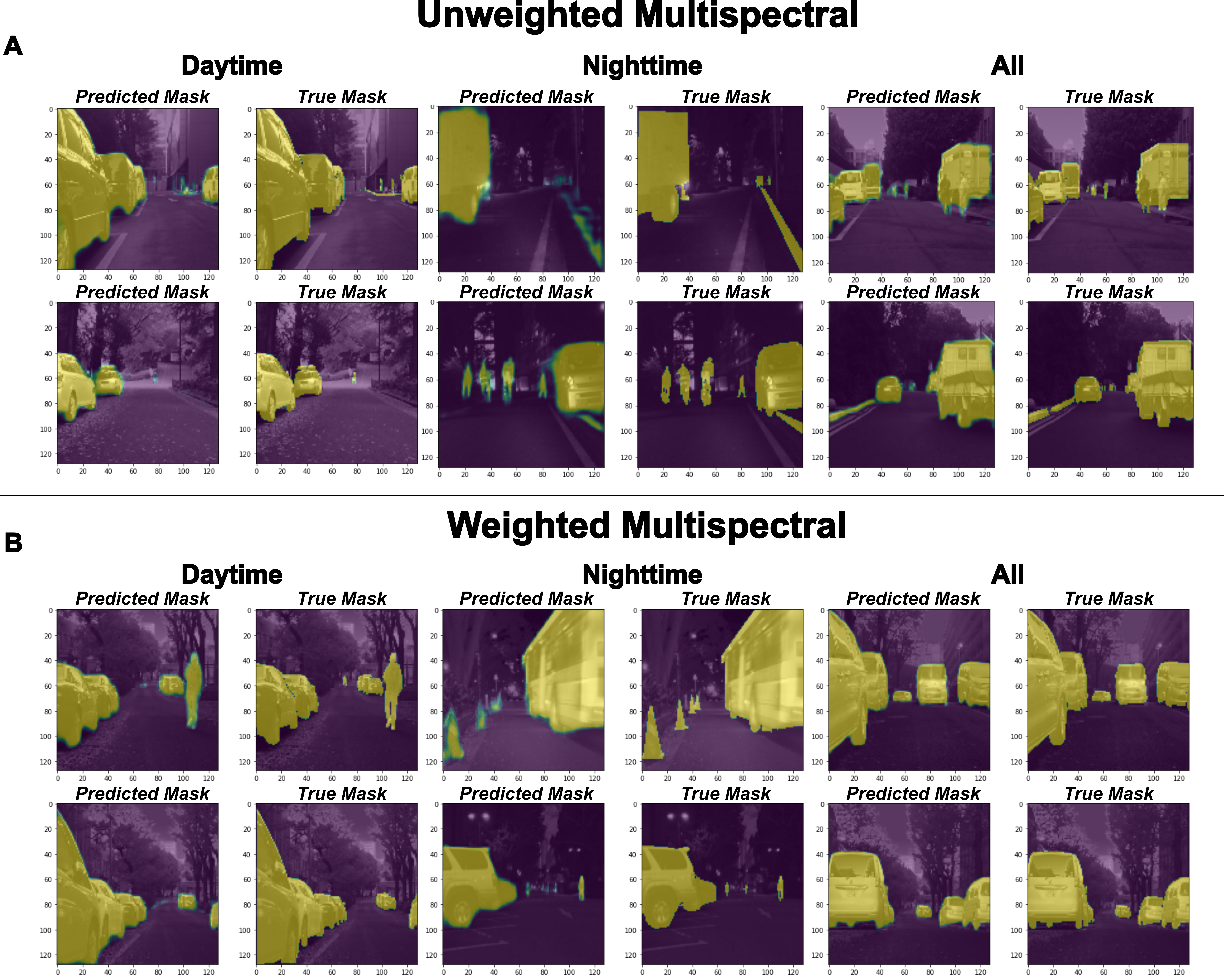

This work addresses the semantic segmentation of street-scene images for autonomous vehicles using an RGB-Thermal dataset. Growing interest in safe, functioning autonomous vehicles has led to the adoption of image segmentation in this area. Research on semantic segmentation and object detection for autonomous vehicles has traditionally relied either on RGB images, including those acquired during times of poor visibility or adverse weather conditions, or on expensive light detection and ranging (LIDAR) technology. This work applies imaging techniques to improve on current state-of-the-art image segmentation models for autonomous vehicles by incorporating information from both RGB and thermal input images. The results described herein show a drastic improvement in segmentation quality, as measured by the average Sørensen-Dice coefficient, when both RGB and thermal information were used across all times of day, compared with models trained on RGB-only or thermal-only channels.
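For readers unfamiliar with the metric, the Sørensen-Dice coefficient between a predicted mask A and a ground-truth mask B is 2|A ∩ B| / (|A| + |B|). The following is a minimal sketch of how a class-averaged score could be computed with NumPy. It is not the paper's evaluation code: the function names `dice_coefficient` and `mean_dice`, the convention of scoring two empty masks as 1.0, and the unweighted average over classes are all assumptions made for illustration.

```python
import numpy as np


def dice_coefficient(pred_mask, true_mask):
    """Sørensen-Dice coefficient between two binary masks (hypothetical helper)."""
    pred_mask = np.asarray(pred_mask, dtype=bool)
    true_mask = np.asarray(true_mask, dtype=bool)
    intersection = np.logical_and(pred_mask, true_mask).sum()
    total = pred_mask.sum() + true_mask.sum()
    if total == 0:
        # Assumption: a class absent from both masks counts as a perfect match.
        return 1.0
    return 2.0 * intersection / total


def mean_dice(pred_labels, true_labels, num_classes):
    """Unweighted average of per-class Dice scores over integer label maps."""
    scores = [
        dice_coefficient(pred_labels == c, true_labels == c)
        for c in range(num_classes)
    ]
    return float(np.mean(scores))


# Toy 2x3 label maps with three classes, for illustration only.
pred = np.array([[0, 1, 1],
                 [0, 2, 2]])
true = np.array([[0, 1, 1],
                 [0, 0, 2]])
print(mean_dice(pred, true, num_classes=3))
```

Averaging per class, rather than over all pixels at once, keeps small classes from being swamped by large ones such as road or background; whether the paper weights classes this way is not stated here.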


| Dataset: Link To Dataset |